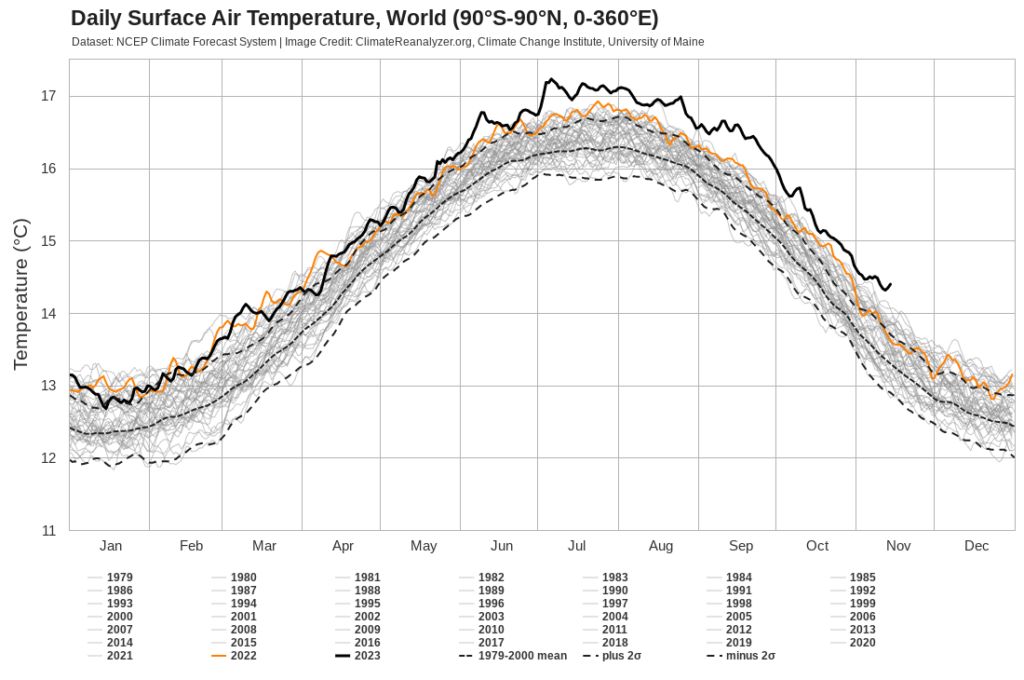

UPDATED: Now includes data to Nov 14 2023

06 July 2023 had the highest ever Global Average 2m (that’s metre for folks who use Freedom Units) Temperature at 17.23C (that’s Celsius).

Gonna be a fun year!

[NOTE: This post is restored from the Wayback Machine. It was initially published December 22, 2016 and lost during a database transfer sometime in the past.]

I can’t remember the exact year, but I know it was in the late 1980s, when I got my first Patagonia Catalogue (I am Canadian after all). It opened my eyes to some amazing outdoor adventures, as well as introducing me to the history of the company – there was a long company history article among the pages.

The product I remember the most from the catalogue? The Ironworker Climbing Pants. The concept behind them has stuck with me for nearly 30 years: pants so tough that they could survive the abuse of an ironworker and a climber on Half Dome.

But I also remember the crazy sailing and fishing products that they had. It impressed me that the people who worked for Patagonia and designed the products weren't just crazy stonewallers, but wanted to be a part of the outdoors, no matter where the outdoors were.

I have never owned anything from Patagonia. My kids wore Patagonia when they were younger, because we had a fantastic Goodwill store when we lived in San Mateo, and people were dropping off some amazing stuff during the crazy years of the boom.

As I have gotten older and more sedentary, I likely can't fit into any of their products with my spreading middle-aged frame. I could buy some knock-off or something from one of the amazing brands that have appeared in the intervening years (I see The North Face everywhere right now – is this a hot brand or just better marketing?).

But this has not stopped my love of (and lust for) Patagonia products. Why would I desire something I could never get into or have any need for?

For the same reason I appreciate anything: the love Patagonia puts into their designs, the simplicity of their complexity, and the pride of the people who wear their products not just as a fashion statement, but because they understand what Patagonia stands for.

This blog has been around for a long time, has moved several times (in both hardware and physical location), has been ignored, and has become broken.

Since the start of the month (April 2022), I have been restoring the links and images on this blog from the Internet Archive's Wayback Machine. Even if you don't want something out there anymore, the Wayback Machine will find it.

It will likely take time to restore the glory that once was the Newest Industry blog, and, yes, some posts will be removed, but it’s coming back.

In 2002, I was invited to speak at an IBM conference in San Francisco. When it came time to give my presentation, no one showed up.

I had forgotten about it until I was perusing the Wayback Machine and found the PDF of my old presentation.

The interesting thing is that the discussion in this doc is still relevant, even though the web is a very different beast than it was in 2002. Caching headers and their deployment have not changed much since they were introduced.

And there are still entities out there who get them wrong.

If you like ancient web docs, check out what webperformance.org looked like in 2007. [Courtesy of the Wayback Machine]

One of my favorite places to get Covid stats from is the Our World In Data data explorer. They aggregate all the stats into a number of great visualizations that you can share with friends.

In this data are nuggets of information that get lost when you are surrounded by North American media. For example, did those of you in North America know that France and Germany are at the center of a new European Covid wave in April 2022?

In this cascade of data are some interesting signs of how our world really uses and abuses information. The NY Times reported on some of the weird Covid data emerging from China (Shanghai’s Low Covid Death Toll Revives Questions About China’s Numbers). The Our World In Data charts show just how unusual this information is.

While I believe that the methods used to control Covid in China are aggressive, they cannot be this successful. Full stop. The case counts are far lower than anywhere else in the world and the confirmed deaths are, well, remarkably low.

Unbelievably low, upon sober thought.

The battle that democratic India is waging to control the release of statistical models of its actual change in mortality during Covid (India Is Stalling the W.H.O.'s Efforts to Make Global Covid Death Toll Public) shines a brighter light on a country with tighter controls, one that is able to bury its actual mortality rate more effectively.

Throughout the Pandemic, there have been two battles: one to control the disease; the other to control the facts about the disease. In North America, the disinformation campaign has been incredibly strong; in China, it pales beside the no-information campaign.

Taking this data, anyone can shape a narrative that reflects their world view. But what narrative can you shape about no data?

This blog used to have a different name, but a few years ago, I let the registration of the domain lapse and someone else snapped it up. It was based around a Hüsker Dü song from Zen Arcade.

I’ve been listening to that album a lot lately, and this song keeps standing out as a timeless reminder of what we will do to ourselves if things get out of hand.

Listen carefully; these lyrics from 1982/3 still have a deep meaning.

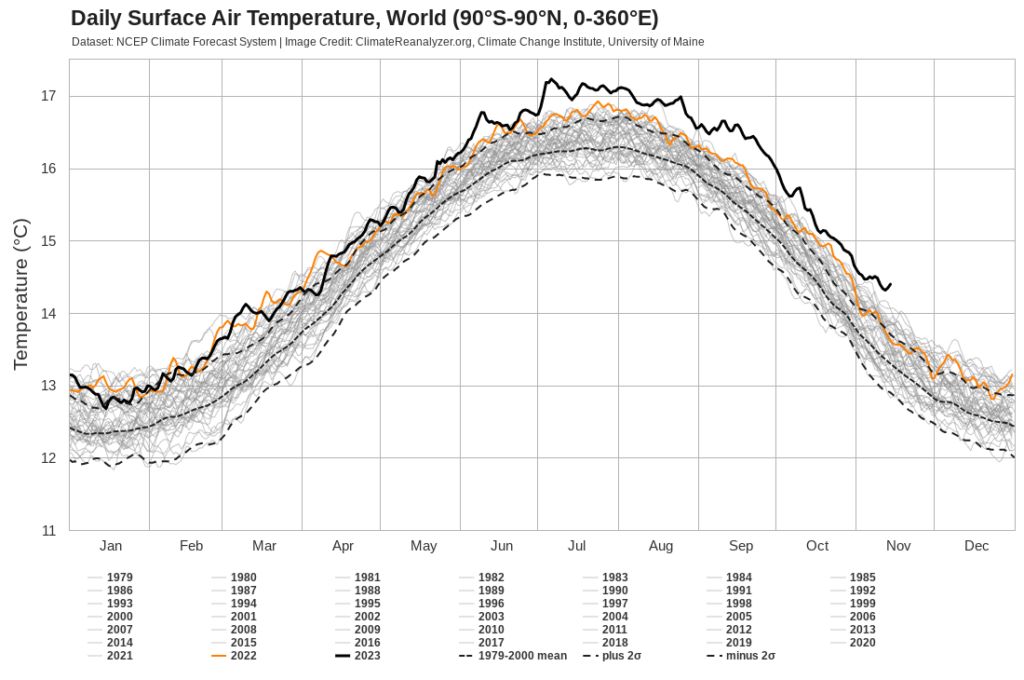

I have a thing for re-using old hardware as server equipment. This is odd given the ease of deploying apps/sites/cat pictures on shiny cloud services, but I am old school and prefer to be able to put my hands on the devices that serve my stuff.

Currently, the 2008 First Generation Aluminum MacBook is running Ubuntu Server and delivering the content you are looking at. Previously, it had been hosted on one of the Raspberry Pi 3b+ machines you see in the background, but I figured it was time for an “upgrade”. I have an old Dell desktop machine under my desk that I may repurpose to run the upgraded version of Ubuntu LTS, but that is a project for the summer.

In the past, back when we lived in Massachusetts, I had a hodgepodge rack of ancient Dell desktops, old server machines, and a bunch of hopes and dreams.

At least with the new setup, I don’t have to worry about there being an inch of water in the basement after a heavy rain.

There have been stories around for the last month that suddenly make “old” hardware shiny again – install ChromeOS Flex! Well, that’s not the only use that old machines have.

Servers can run on just about any platform. Even if it’s just a local DNS or MX server, it doesn’t need to go on the trash heap.

It's not just you who can benefit from recycling or donating still-working computers and equipment. Not everyone has access to the best and the shiniest, but that may not be what they need. Giving a family an old laptop that can run ChromeOS Flex may make it easier to raise them above the digital poverty line. That old iPhone or Android device that you aren't using could make it easier for a family to stay in touch.

If you aren’t using it anymore, donate or recycle it appropriately. Info on donating and recycling your old hardware is available here.

Old to you may be amazing to someone else.

Google’s Core Web Vitals initiative has become a larger part of discussions that we have with customers as they begin setting new performance KPIs for 2021-2022. These conversations center on the values generated by Lighthouse, WebPageTest, and Performance Insights testing, as well as the cumulative data collected by CrUX and Akamai mPulse and how to use the collected information to “improve” these numbers.

Google has delayed adding Core Web Vitals to the Page Rank system twice. The initial rollout was scheduled for 2020, but that was delayed as the initial disruption caused by the pandemic saw many sites halt all innovation and improvement efforts until the challenges of a remote work environment could be overcome. The next target date was set for May 2021, but that has been pushed back to June 2021, with a phase-in period that will last until August 2021.

Why the emphasis on improving the Core Web Vitals values? The simple reason is that these values will now be used as a factor in the Google Page Rank algorithm. Any input that modifies an organization’s SEO efforts immediately draws a great deal of attention as these rankings can have a measurable effect on revenue and engagement, depending on the customer.
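For context, Google publishes a "good" and a "poor" threshold for each Core Web Vital and assesses a page at the 75th percentile of its page loads. The sketch below hard-codes the 2021 metric set (LCP, FID, CLS; FID has since been replaced by INP) and classifies a list of samples. The nearest-rank percentile and the sample values are illustrative assumptions; this is not how CrUX actually aggregates its data.

```python
import math

# Upper bounds for "good" and "needs improvement" from Google's 2021
# Core Web Vitals definitions. Anything above the second value is "poor".
THRESHOLDS = {
    "LCP": (2500.0, 4000.0),  # Largest Contentful Paint, milliseconds
    "FID": (100.0, 300.0),    # First Input Delay, milliseconds
    "CLS": (0.1, 0.25),       # Cumulative Layout Shift, unitless score
}


def p75(values):
    """Nearest-rank 75th percentile (a simplification; CrUX uses histograms)."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))  # 1-based nearest rank
    return ordered[rank - 1]


def assess(metric, samples):
    """Return (p75 value, rating) for one metric's samples."""
    good, needs_improvement = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return value, "good"
    if value <= needs_improvement:
        return value, "needs improvement"
    return value, "poor"


if __name__ == "__main__":
    # Made-up LCP samples in milliseconds.
    lcp_samples = [1800, 2100, 2300, 2600, 3900, 5200, 2000, 2400]
    print(assess("LCP", lcp_samples))  # prints: (2600, 'needs improvement')
```

Note that most of these sample loads are under 2.5 seconds, yet the page still misses "good", because the rating depends on the slowest quarter of visits rather than the typical one.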

While conversations may start with the simple request from customers for guidance around what they can do to improve their Core Web Vitals metrics, what may be missed in these conversations is a discussion of the wider context of what the Core Web Vitals metrics represent.

The best place to start is to define what the Core Web Vitals are (Google has done this) and how the data is collected. The criteria for gathering Core Web Vitals in mPulse are:

Visitors who engage with the site and generate Page View or SPA Hard pages and are using recent versions of Chromium-based browsers.

However, there is a separate definition, the one that affects the Page Rank algorithm. For Page Rank data, the collection criteria gets a substantial refinement:

Visitors who engage with the site and generate Page View or SPA Hard pages who (it is assumed) originated from search engine results (preferably generated by Google) and are using the Chrome and Chrome Mobile browsers.
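To make the gap between these two definitions concrete, here is a minimal sketch that encodes each one as a filter over RUM beacons. The `Beacon` fields, the list of Chromium-based browser families, and the "came from a Google search" check based on the referrer host are all hypothetical simplifications, not mPulse's or Google's actual logic, and browser version checks are omitted.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Beacon:
    # Hypothetical beacon shape; real RUM tools name these fields differently.
    page_type: str  # "page_view", "spa_hard", or "spa_soft"
    browser: str    # browser family, e.g. "Chrome", "Chrome Mobile", "Safari"
    referrer: str   # full referrer URL, "" if none


# Assumption: a rough list of Chromium-based browser families.
CHROMIUM_FAMILIES = {"Chrome", "Chrome Mobile", "Edge", "Opera", "Samsung Internet"}
CHROME_ONLY = {"Chrome", "Chrome Mobile"}
FULL_PAGE_TYPES = {"page_view", "spa_hard"}  # SPA soft navigations excluded


def in_rum_cwv_set(b: Beacon) -> bool:
    """First definition: full page loads from Chromium-based browsers."""
    return b.page_type in FULL_PAGE_TYPES and b.browser in CHROMIUM_FAMILIES


def from_google_search(referrer: str) -> bool:
    """Crude proxy for 'arrived from Google search': referrer host is a Google domain."""
    host = urlparse(referrer).hostname or ""
    return host.startswith(("google.", "www.google."))


def in_ranking_cwv_set(b: Beacon) -> bool:
    """Second definition: Chrome only, and (assumed) referred by Google search."""
    return (b.page_type in FULL_PAGE_TYPES
            and b.browser in CHROME_ONLY
            and from_google_search(b.referrer))


if __name__ == "__main__":
    beacons = [
        Beacon("page_view", "Chrome Mobile", "https://www.google.de/"),
        Beacon("spa_hard", "Edge", "https://www.bing.com/"),
        Beacon("spa_soft", "Chrome", "https://www.google.com/"),
        Beacon("page_view", "Safari", "https://www.google.com/"),
    ]
    rum_set = [b for b in beacons if in_rum_cwv_set(b)]
    ranking_set = [b for b in beacons if in_ranking_cwv_set(b)]
    print(len(rum_set), len(ranking_set))  # prints: 2 1
```

Even in this toy example, the Safari visit and the SPA soft navigation never count, and the ranking subset shrinks to a single beacon.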

There are a number of caveats in both those statements! When described this way, customers may start to ask how relevant these metrics are for driving real-world performance initiatives and whether improving Core Web Vitals metrics will actually drive improvement in business KPIs like conversion, engagement, and revenue.

During conversations with customers, it is also critical to highlight the notable omissions in the collection of Core Web Vitals metrics. Some of these may cause customers to be even more cautious about applying this data.

Google is, however, listening to feedback on the collection of Core Web Vitals. Already there have been changes to the Cumulative Layout Shift (CLS) collection methodology that allow it to more accurately reflect long-running pages and SPA sites. This leads to some optimism that the collection of Core Web Vitals data may evolve over time to include a far broader subset of browsers and customer experiences, reflecting the true complexity of customer interactions with modern web applications.
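The CLS change works by grouping layout shifts into "session windows": shifts less than 1 second apart belong to the same window, a window can span at most 5 seconds, and CLS becomes the largest window total instead of the sum over the page's whole lifetime. A simplified sketch follows, assuming the input is already a time-ordered list of `(start_time_ms, score)` pairs with shifts after recent user input removed (the real `web-vitals` library handles that filtering itself).

```python
GAP_MS = 1000.0     # max gap between consecutive shifts in one window
WINDOW_MS = 5000.0  # max span of a single window


def cls_cumulative(shifts):
    """Original definition: sum every layout-shift score for the page's lifetime."""
    return sum(score for _, score in shifts)


def cls_session_windows(shifts):
    """Revised definition: the largest total of any single session window."""
    best = 0.0
    window_total = 0.0
    window_start = prev_start = None
    for start, score in shifts:
        if (window_start is not None
                and start - prev_start < GAP_MS
                and start - window_start < WINDOW_MS):
            window_total += score  # continue the current window
        else:
            window_start = start   # open a new window
            window_total = score
        prev_start = start
        best = max(best, window_total)
    return best


if __name__ == "__main__":
    # Made-up shifts from a long-running SPA: a burst at load, a burst
    # 30 seconds later, and a single shift after 90 seconds.
    shifts = [(100, 0.05), (400, 0.04), (30000, 0.03), (30500, 0.02), (90000, 0.06)]
    print(round(cls_cumulative(shifts), 3), round(cls_session_windows(shifts), 3))
    # prints: 0.2 0.09
```

Under the old lifetime sum this page rates "needs improvement"; under session windows it rates "good", which is why long-lived pages and SPAs benefited most from the change.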

Exposing the Core Web Vitals metrics to a wider performance audience will lead to customer questions about how web performance professionals are using this information to shape performance strategies. Overall, the recommendation thus far is to approach this data with caution and emphasize the current focus these metrics have (affecting Page Rank results), the limitations that exist in the data collection (limited browser support, lack of SPA Soft Navigations, mobile data only from Android), and the lack of substantial verification that improving Core Web Vitals has a quantifiable positive effect on business KPIs.

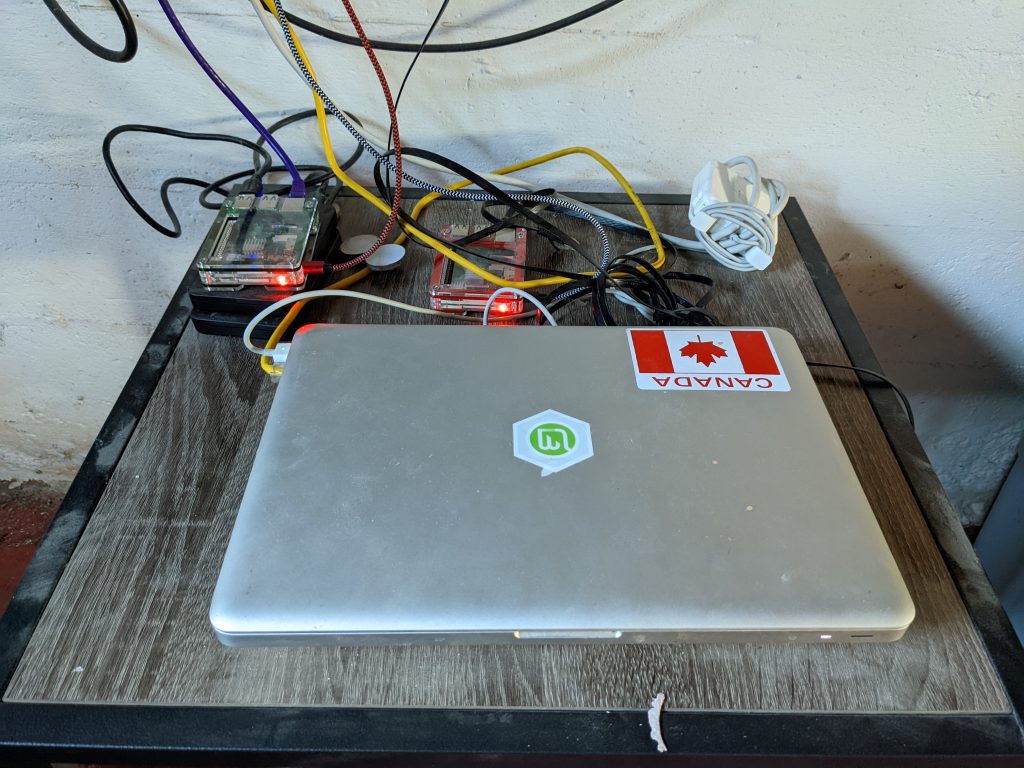

When working with customers in Europe, or customers who serve their content in both the US and Europe, I am stunned when they ask why their performance is so much slower in certain European countries than in the US.

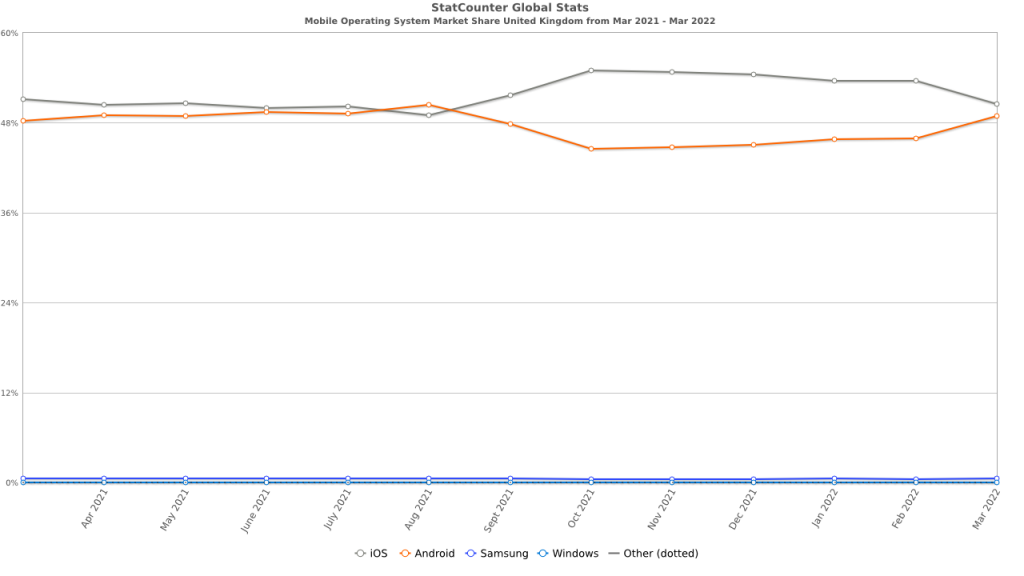

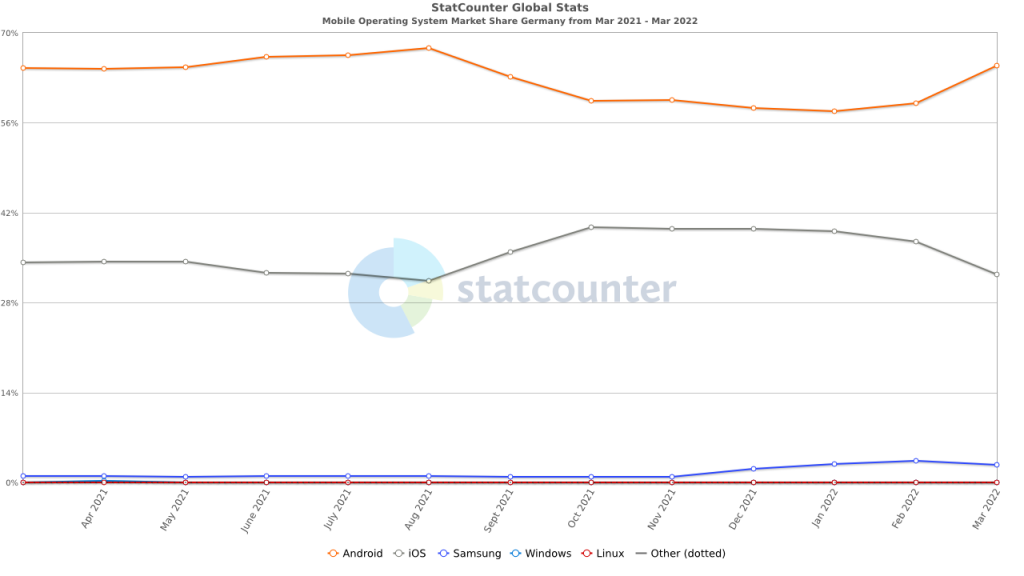

Glancing at some publicly available stats (thank you Statcounter!), the reason is clear: Android is the dominant Mobile platform in Europe.

This doesn’t hold true everywhere in Europe – in the UK (yes, it is still in Europe!), Android and iOS have nearly equal Mobile device market share.

But in Germany, the divide is vast, with Android clearly dominating the playing field.

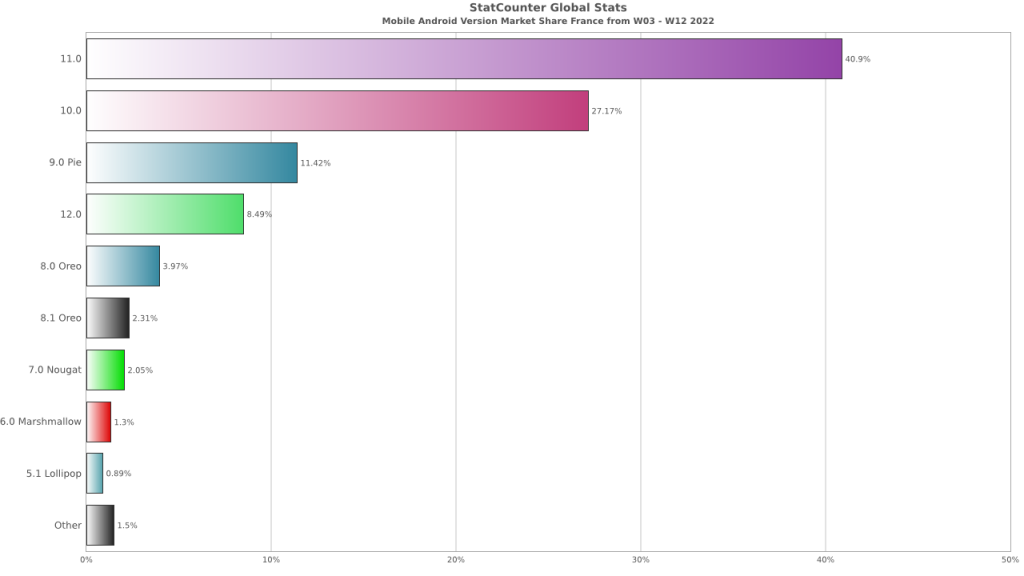

But there is yet another divide that is greater than even the Android/iOS split – the Android version difference. Depending on the country, your performance could depend entirely on the versions of Android that your visitors use.

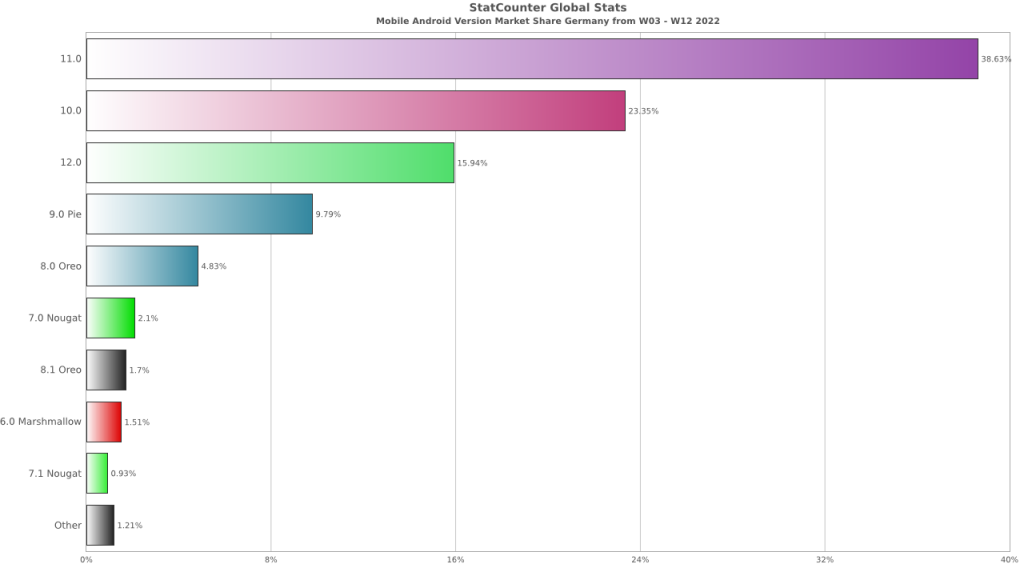

Starting with Germany in Q1 2022, Android usage is dominated by the three latest OS versions: 10, 11, and 12.

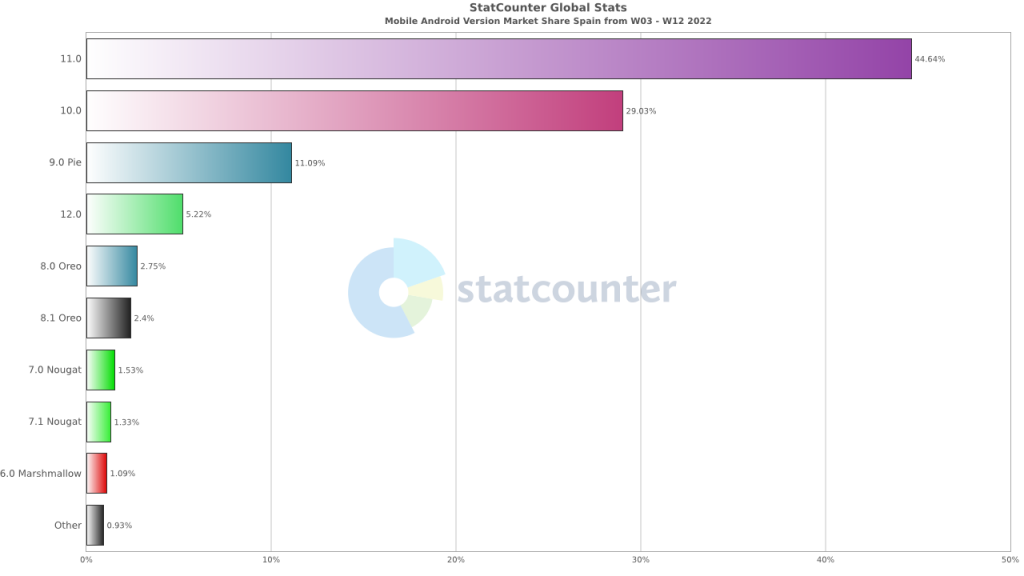

But in countries like Spain and France, Android/9 is still a prevalent OS. This version of Android runs on much older hardware, so visitors using it are more likely to experience degraded performance than those on devices running 11 or 12.
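If your RUM data lets you export per-visit records, a first pass at seeing this divide in your own traffic is to segment load times by country and Android major version. The sketch below assumes hypothetical `(country, user_agent, load_ms)` tuples and parses the version from the User-Agent string. Treat that parsing as illustrative only: newer Chrome releases report a frozen "Android 10" in the reduced User-Agent string, so real analysis would need the version from User-Agent Client Hints (`Sec-CH-UA-Platform-Version`) or your RUM tool's own device data.

```python
import re
from collections import defaultdict
from statistics import median

# Captures the major version from e.g. "Linux; Android 9; SM-J530F".
# Caveat: reduced Chrome User-Agent strings always say "Android 10".
ANDROID_RE = re.compile(r"Android (\d+)")


def android_major(user_agent):
    """Return the Android major version in a UA string, or None if absent."""
    m = ANDROID_RE.search(user_agent)
    return int(m.group(1)) if m else None


def median_load_by_version(beacons):
    """Median load time per (country, Android major version); non-Android visits are skipped."""
    groups = defaultdict(list)
    for country, user_agent, load_ms in beacons:
        version = android_major(user_agent)
        if version is not None:
            groups[(country, version)].append(load_ms)
    return {key: median(values) for key, values in groups.items()}


if __name__ == "__main__":
    # Made-up beacons for illustration.
    beacons = [
        ("ES", "Mozilla/5.0 (Linux; Android 9; SM-J530F)", 5200),
        ("ES", "Mozilla/5.0 (Linux; Android 9; SM-A105F)", 4800),
        ("DE", "Mozilla/5.0 (Linux; Android 12; Pixel 6)", 2700),
        ("DE", "Mozilla/5.0 (iPhone; CPU iPhone OS 15_4 like Mac OS X)", 2500),
    ]
    print(median_load_by_version(beacons))
    # prints: {('ES', 9): 5000.0, ('DE', 12): 2700}
```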

For teams trying to design performant web sites, this is a critical piece of information. While Mobile is the dominant platform, sites need to be designed to deliver excellent user experiences for all visitors. And in Europe, this means Android users.

What can you do?

And, most importantly, use an Android device. If you make your development team use Android for a week, they might get the message that they need to do more to reach into this neglected Mobile population.

If you’re a browser geek like I am, check out this post I wrote in 2009 about the browser stats. The world is a very different place now.

A key tool in web performance measurement is Real User Measurement (RUM), and there is a strong drive to make it part of every web performance measurement strategy. As someone who cut their teeth on synthetic measurements using distributed robots and repeatable scripts, it took me a long time to see the light of RUM, but I am now a complete convert – I understand that the richness and completeness of RUM provides data that I was blocked from seeing with synthetic data.

[UPDATE: I work for Akamai focusing on the mPulse RUM tool.]

The key for organizations is realizing that RUM is complementary to Synthetic Measurements. The two work together when identifying and solving tricky external web performance issues that can be missed by using a single measurement perspective.

The best way to adopt RUM is to use the dimensions already in place to segment and analyze visitors in traditional web analytics tools. The time and effort already invested there can inform RUM configuration by determining:

This information needs to bleed through so that it can be linked directly to the components of the infrastructure and codebase that were used when the customer made their visit. Limiting this data pool to the identification and solving of infrastructure, application, and operations issues isolates the information from a potentially huge population of hungry RUM consumers – the business side of any organization.

The business users who fed their web analytics data into the setup of RUM need to see the benefit of their efforts. By sharing RUM with the teams that use web analytics and aligning the two strategies, companies can directly tie detailed performance data to existing customer analytics. With this combination, they can begin to truly understand the effects of A/B testing, marketing campaigns, and performance changes on business success and health. But business users need a different language to understand the data that web performance professionals consume so naturally.
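As a rough illustration of tying performance data to customer analytics, the sketch below joins RUM page load times to analytics conversion flags and reports the conversion rate per load-time bucket. It assumes both systems share a hypothetical session ID, which real deployments have to arrange deliberately; the input data is made up and shows the shape of the join, not a real result.

```python
from collections import defaultdict


def conversion_by_load_bucket(rum_load_ms, converted, bucket_ms=1000):
    """Join RUM and analytics on session ID; return {bucket_start_ms: conversion_rate}.

    rum_load_ms: {session_id: page load time in ms} from the RUM tool
    converted:   {session_id: True/False} from the web analytics tool
    """
    totals = defaultdict(lambda: [0, 0])  # bucket -> [sessions, conversions]
    for session_id, load_ms in rum_load_ms.items():
        if session_id not in converted:
            continue  # no matching analytics session, so it can't be joined
        bucket = int(load_ms // bucket_ms) * bucket_ms
        totals[bucket][0] += 1
        totals[bucket][1] += int(converted[session_id])
    return {b: conv / n for b, (n, conv) in sorted(totals.items())}


if __name__ == "__main__":
    rum = {"s1": 1800, "s2": 1200, "s3": 4200, "s4": 4700, "s5": 2600, "s6": 900}
    analytics = {"s1": True, "s2": True, "s3": False, "s4": True, "s5": False}
    print(conversion_by_load_bucket(rum, analytics))
    # prints: {1000: 1.0, 2000: 0.0, 4000: 0.5}
```

A table like this, conversion rate next to load time, is closer to the language business teams already speak than a percentile chart on its own.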

I don't know what the language is, but developing it means taking the data into business teams and seeing how it works for them. What companies will find is that the data used by one group won't be the same as the data used by the other, but there will be enough shared characteristics to allow the groups to share a dialect of performance when speaking to each other.

This new audience presents the challenge of clearly presenting the data in a form that is easily consumed by business teams alongside existing analytics data. Providing yet another tool or interface will not drive adoption. Adoption will be driven by attaching RUM to the multi-billion dollar analytics industry so that the value of these critical metrics is easily understood by, and made actionable for, the business side of any organization.

So, as the proponents of RUM in web performance, the question we need to ask is not “Should we do this?”, but rather “Why aren’t we doing this already?”.

Copyright © 2024 Performance Zen
